PhenoMeNal is a 3-year EU Horizon 2020 project (2015-2018) that will develop a standardised e-infrastructure for analysing medical metabolic phenotype data. This includes developing standards for data exchange, along with pipelines, computational frameworks and resources for processing, analysing and mining the massive amounts of medical molecular phenotyping and genotyping data that will be generated by metabolomics applications now entering research and the clinic.

At the Spjuth research group we lead WP5, “Operation and maintenance of PhenoMeNal grid/cloud”. Our aim is to give PhenoMeNal and researchers the capability to spawn secure Virtual Research Environments (VRE or VE) with easy access to scalable, interoperable data and tools for data analysis. These virtual environments should be able to run on most hardware architectures, ranging from single laptops and workstations to private and public cloud (IaaS) providers.

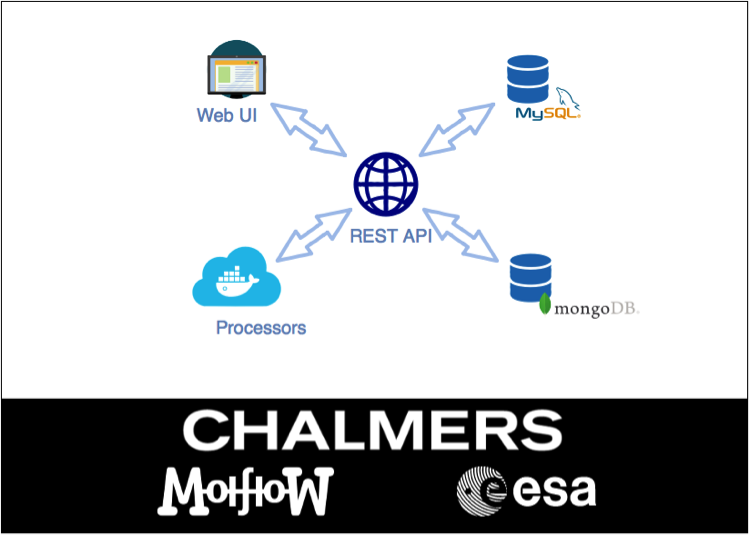

We use MANTL to set up and provide a microservice-oriented virtual infrastructure. In PhenoMeNal, all partners provide tools as Docker images that are automatically built, tested, and pushed to DockerHub by a continuous integration system (Jenkins). Within MANTL we provide long-running services using Marathon, including the Jupyter and Galaxy workflow systems, which can orchestrate microservice-based pipelines using e.g. Chronos or Kubernetes.
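To make the idea of a long-running service on Marathon more concrete, here is a minimal sketch of what an app definition for a Jupyter container could look like. The helper `marathon_app`, the image name `jupyter/base-notebook`, and the resource figures are illustrative assumptions, not the actual PhenoMeNal configuration. The script only builds and prints the JSON payload; it does not contact a cluster.

```python
import json


def marathon_app(app_id, image, container_port, cpus=1.0, mem_mb=2048, instances=1):
    """Build a Marathon app definition for a long-running Docker service.

    Hypothetical helper for illustration; all defaults are assumptions.
    """
    return {
        "id": app_id,
        "cpus": cpus,
        "mem": mem_mb,
        "instances": instances,
        "container": {
            "type": "DOCKER",
            "docker": {
                "image": image,
                "network": "BRIDGE",
                # hostPort 0 asks Marathon to assign a free port on the host
                "portMappings": [{"containerPort": container_port, "hostPort": 0}],
            },
        },
    }


# Assumed example values: a public Jupyter image listening on port 8888.
jupyter_app = marathon_app("/jupyter", "jupyter/base-notebook", 8888)
payload = json.dumps(jupyter_app, indent=2)
print(payload)
```

A payload of this shape would typically be submitted to Marathon's `/v2/apps` REST endpoint, after which Marathon keeps the requested number of instances running.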

So far we have successfully provisioned the PhenoMeNal VRE on Google Cloud Platform, EBI Embassy Cloud (OpenStack), and SNIC Science Cloud (OpenStack). We are currently experimenting with Packer to speed up the provisioning of virtual machines within the VRE, and with Consul to federate multiple VREs. Another ongoing project is to use Apache Spark for distributed data analysis within the VRE.

Links:

http://www.farmbio.uu.se/forskning/researchgroups/pb/PhenoMeNal/

http://www.farmbio.uu.se/forskning/researchgroups/pb/Data-intensive/

http://phenomenal-h2020.eu/